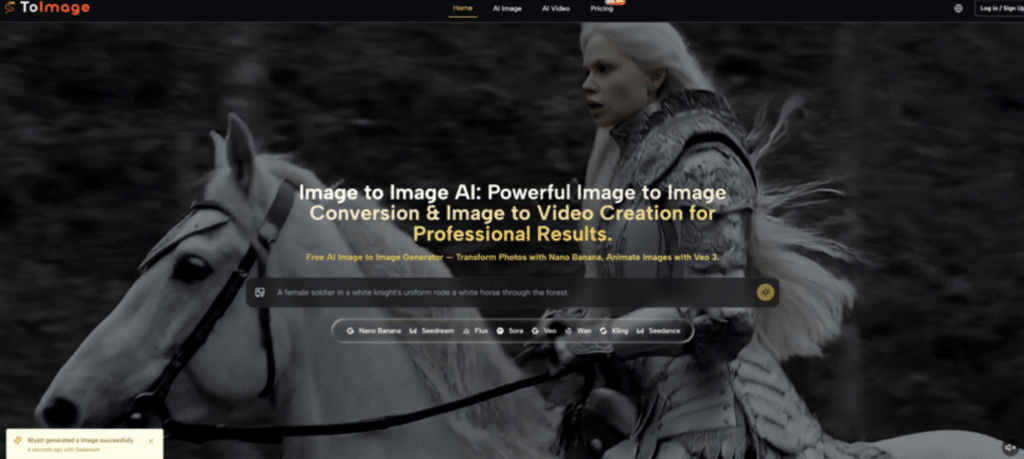

Creating a cohesive and constantly engaging visual identity across multiple digital platforms can often feel like an endless treadmill for modern creators and marketing teams. You might manage to capture a genuinely great core photograph during a session, but adapting that single asset to fit the moody, atmospheric aesthetic of a specific seasonal campaign, or transforming it into an engaging short-form video clip, typically drains hours of manual editing time. This constant pressure to produce fresh material often leads directly to creative burnout. However, adopting a modern Image to Image workflow can dramatically alleviate this operational friction. Instead of endlessly tweaking adjustment layers and complex masks, we can now use sophisticated generation models to interpret our base images and output professional-grade variations almost instantly, breathing entirely new life into older or simpler assets.

The beauty of this technology lies in its accessibility and its ability to act as a multiplier for your existing creativity. You no longer need to be a master of complex visual effects software to execute a high-level concept. If you can clearly articulate the mood, the lighting, and the environment you want to see, the processing models can translate that vision into reality based on the structural foundation of your uploaded photo. This allows creators to experiment with bold, unconventional artistic directions that they might otherwise avoid due to the sheer time investment required to create them manually from scratch.

Diving Into the Leading Visual Generation Models

As you begin to integrate this methodology into your regular content pipeline, it becomes clear that selecting the right processing architecture is just as important as writing a good prompt. The Image to Image AI platform hosts a variety of industry-leading models, each trained with a distinct philosophy regarding how visual data should be interpreted and rendered. Some prioritize absolute photorealism, while others lean heavily into cinematic storytelling or lightning-fast iteration speeds.

Navigating these options might seem slightly overwhelming at first, but viewing them as different tools in a well-equipped workshop helps clarify the process. By understanding the specific technical biases and strengths of engines like Seedream, Sora, and Veo, you can strategically route your creative tasks to the model that is naturally predisposed to give you the best possible result with the least amount of friction.

Rapid Asset Creation Powered by Seedream Technology

When dealing with the relentless demands of daily social media posting or rapid A/B testing for digital advertisements, speed becomes a critical factor. In my experience, the Seedream models are exceptionally well-suited for high-volume workflows. While other models might take longer to calculate the perfect light dispersion for a hyper-realistic portrait, Seedream prioritizes getting a high-quality, artistically versatile output onto your screen as quickly as possible.

This rapid iteration cycle is incredibly freeing. It allows you to quickly test out ten completely different stylistic prompts on a single base image in the time it would normally take to generate just one highly detailed render. If you are exploring whether a product looks better surrounded by a futuristic cyberpunk cityscape or a serene, minimalist Zen garden, Seedream provides the fastest path to visualizing those concepts side-by-side.
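
As a rough illustration of that side-by-side testing, the sketch below pairs one base image with several style prompts. It is a minimal sketch under stated assumptions: `VariantJob`, `build_variant_jobs`, the `"seedream"` label, and the file name are hypothetical names chosen for this example, not part of any platform API, and the code only prepares the requests rather than submitting them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantJob:
    """One generation request: a base image plus a single style prompt (hypothetical shape)."""
    base_image: str
    model: str
    prompt: str


def build_variant_jobs(base_image, subject, styles, model="seedream"):
    """Pair one base image with each style so the outputs can be compared side by side."""
    return [
        VariantJob(base_image, model, f"{subject}, {style}")
        for style in styles
    ]


# Hypothetical inputs: the two environments mentioned in the paragraph above.
jobs = build_variant_jobs(
    "product.png",
    "the product on a pedestal",
    ["futuristic cyberpunk cityscape", "serene minimalist Zen garden"],
)

for job in jobs:
    print(job.model, "|", job.prompt)
```

Keeping the subject fixed and varying only the style text makes it easier to attribute differences between outputs to the style itself.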

Balancing High Speed Production with Artistic Quality

A common concern with high-speed generation is a potential drop in final visual fidelity. However, the current iteration of these rapid-response models maintains a surprisingly high professional standard. They are particularly adept at handling stylized transformations, such as converting a standard photograph into a rich oil painting, a vibrant anime still, or a soft watercolor illustration. While they might occasionally gloss over microscopic details such as skin pores compared to the detail-focused Nano Banana Pro architecture, their strength lies in capturing the overall emotional tone and artistic flair quickly, making them indispensable for trend-responsive content creation.

Cinematic Storytelling Driven by the Sora Architecture

When the goal shifts from creating a beautiful static image to telling a compelling visual story, the Sora architecture represents a massive leap forward. In my observation, this model possesses a unique understanding of cinematic language: camera angles, focal depth, and narrative pacing. When you upload a base image to Sora, it does not just animate the pixels; it attempts to understand the implicit story within the frame and extends that narrative forward in time.

If you provide a photo of a lone figure standing on a misty cliff edge, Sora excels at generating a sweeping, drone-like camera movement that slowly pushes in, while the mist rolls naturally over the rocks. The emotional resonance achieved by this model is profound, making it an excellent choice for creating short brand films or atmospheric mood boards. A minor limitation to keep in mind is that highly complex, multi-character interactions might sometimes exhibit slight continuity glitches, so focusing on strong, centralized subjects usually yields the most breathtaking cinematic results.

Understanding the Native Audio Generation in Veo

While Sora focuses heavily on the visual cinematic experience, the Veo architecture brings another crucial dimension to the table: integrated sound. Typically, generating an AI video means you are left with a silent clip that requires an entirely separate workflow to find and sync appropriate sound effects and background music. Veo fundamentally changes this by generating a synchronized audio track natively alongside the video frames.

If your base image features a bustling city street and you ask the model to animate it, it will simultaneously generate the ambient sounds of distant traffic, footsteps, and urban hum. This cohesive generation process ensures that the audio perfectly matches the physics and pacing of the visual movement. It saves a tremendous amount of post-production time and allows creators to preview the full, immersive impact of their generated asset immediately within the platform interface.

Following the Standard Process for Asset Creation

To maintain a smooth and predictable workflow, especially when experimenting with various powerful models, it is best to stick to the established operational steps designed by Image to Image AI.

- Upload the base image you wish to transform or animate to the generation interface, making sure the main subject is clearly visible.

- Choose your generation model from the available suite, selecting based on whether you need rapid styling, hyper-realism, or cinematic video animation.

- Review the output options generated by the AI and use the platform tools to compare results, selecting the one that best fits your project goals.

Evaluating Technical Specifications of Processing Engines

To streamline your decision-making process during production, here is a concise overview comparing the fundamental strengths of the key models discussed, helping you choose the right tool for the job.

| Generation Architecture | Core Operational Strength | Best Professional Use Case |
| --- | --- | --- |
| Seedream Models | Lightning Fast Iteration | High Volume Social Content |
| Sora Architecture | Cinematic Camera Movement | Narrative Brand Storytelling |
| Veo Three Engine | Integrated Native Audio | Immersive Multimedia Assets |
| Nano Banana Series | Uncompromising Detail | High End Commercial Imagery |
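
The comparison above can also be written as a simple lookup, which may be convenient if model selection is scripted. This is only a sketch: the model labels are copied from the table, while the need keys (`fast_iteration` and so on) and the `choose_model` helper are hypothetical names introduced for this example.

```python
# Model labels come from the comparison table; the need keys are hypothetical.
MODEL_BY_NEED = {
    "fast_iteration": "Seedream Models",
    "cinematic_motion": "Sora Architecture",
    "native_audio": "Veo Three Engine",
    "fine_detail": "Nano Banana Series",
}


def choose_model(need: str) -> str:
    """Return the model family the table associates with a given need."""
    try:
        return MODEL_BY_NEED[need]
    except KeyError:
        valid = ", ".join(sorted(MODEL_BY_NEED))
        raise ValueError(f"unknown need {need!r}; expected one of: {valid}") from None


print(choose_model("native_audio"))  # prints "Veo Three Engine"
```

Raising an error on an unknown need, rather than falling back to a default model, keeps a typo from silently routing work to the wrong engine.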